Discussing Actual Intelligence with Dr Brett Kagan and Jenn Leung

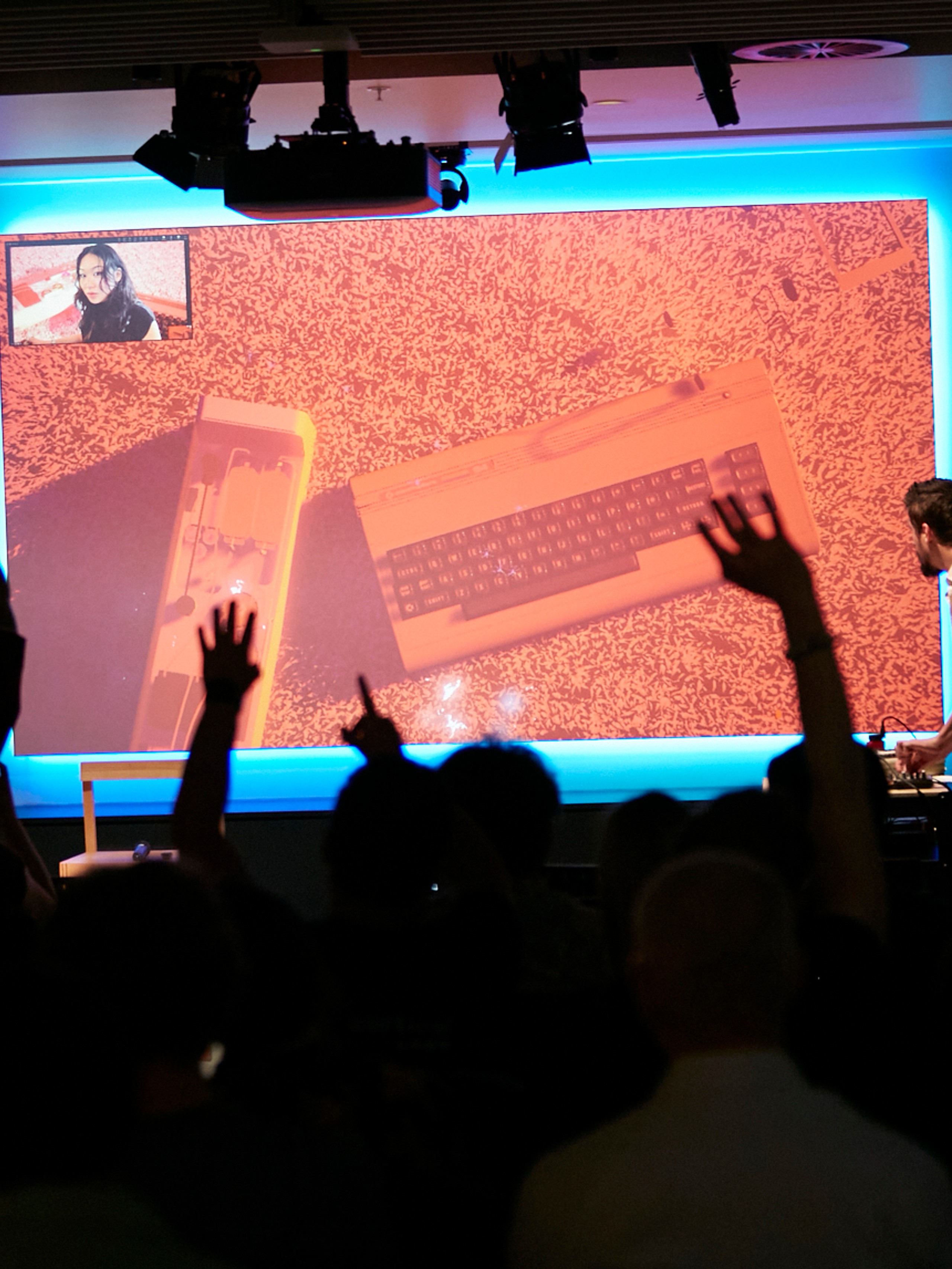

Actual Intelligence, NCM, 2026. Photo by Casey Horsfield.

Interview

Jemimah Widdicombe, Dr Brett Kagan, Jenn Leung • 18 Mar 2026

Cortical Labs is a Melbourne-based biocomputing startup exploring the development and applications of biological intelligence systems.

In February 2026, NCM hosted Actual Intelligence, a collaborative performance and panel discussion with Cortical Labs, featuring live neurons interfacing with a Commodore 64 to create music.

This interview took place in March 2026, between Dr Brett Kagan, Cortical Labs Chief Scientific Officer and Chief Operations Officer; Jenn Leung, creative technologist and researcher; and Jemimah Widdicombe, NCM’s Senior Curator.

Jemimah Widdicombe (JW): Jenn, your work connects biological intelligence, gaming engines and speculative research. What led you to working with Cortical Labs and NCM?

Jenn Leung (JL): I’ve always had an interest in unconventional computing, and around 2021 I was reading up on reservoir computing and what types of computation were possible. During this time, I was also working as a game developer and technical artist, so I had to experiment with a range of streaming tools and game agent behaviour trees to learn how to create interesting simulations. These budding interests made me curious about new forms of technology in synthetic biology and computing beyond silicon-based computers!

In 2024, I was working as an AI researcher at Antikythera, an R+D institute funded by the Berggruen Institute. At the time we were briefed to research different forms of cognitive infrastructures and synthetic intelligences, and it was then we came across the DishBrain project as a really important point of departure for the future of biocomputing.

It was then that my collaborators Chloe Loewith and Ivar Frisch and I worked on a couple of papers together, Organoid Array Computing: The Design Space of Organoid Intelligence and Assembloid Agency: Unreal Engine API for brain-on-a-chip platforms, through which we got to deepen our understanding of biocomputing with living neurons. My background in game development and real-time streaming became extremely helpful for working with these adaptive systems, as the work relied primarily on low-latency closed-loop interactions between a living system and a game environment.

Meanwhile, I was exploring using spiking neural network simulators to simulate spikes, or using EEG to stream spiking activity to Unreal Engine to do reinforcement learning within the game environment… It was during this time that a friend encouraged me to reach out to Cortical Labs! It’s been incredible to see Cortical Labs reach so many people with their DishBrain and Doom projects!

JW: Brett, Cortical Labs describes one of its core innovations as Synthetic Biological Intelligence, which is lab-grown human neurons integrated with silicon. In simple terms, what is SBI, and how does it differ from Artificial Intelligence?

Brett Kagan (BK): AI is software inspired by the brain, while Synthetic Biological Intelligence (SBI) is real brain cells working with computers. It’s less like building a smarter program and more like growing a new kind of computing system. And if we get it right, it could open the door to powerful new ways of studying the brain, developing medicines, and creating energy-efficient intelligent machines.

Artificial Intelligence, or AI, runs entirely in software. It uses mathematical models—like neural networks—that are inspired by the brain but ultimately run on traditional silicon chips. These systems learn by adjusting numbers in code and usually require huge datasets and a lot of energy to train.

SBI is different because the learning system is literally biological. In SBI, real human neurons (typically derived from human induced pluripotent stem cells) are grown in a lab and connected to electronic hardware. The hardware sends signals to the neurons and reads their activity back. Over time, the neurons adapt and reorganise themselves, which allows the system to learn from experience.

JW: Jenn, step us through the thinking and process of making Closed Loop Connections. What questions in your own practice did this collaboration allow you to explore?

JL: I find the networking of living cells and scaffolding them into other systems the most interesting direction for research. For me, using the spiking activities to drive game states was particularly appealing, as it leads to the potential for multiplayer communication and games.

Closed Loop Connections was a first stab at connecting the CL1 to a range of expansive creative technology applications using living neurons as real-time processors. These included processing human movement tracking data from TouchDesigner, entering embodied game environments in Unreal Engine and generating biological music signatures with a Commodore 64.

We also tried to “close the loop” between moving bodies and a living network of cultured neurons, where audience members’ movement patterns are translated into custom stimulation patterns sent to the CL1.

In response to the movement data, the neurons produce spiking activity as they process and adapt to these inputs. The CL1 streams this raw neural activity data in real time via User Datagram Protocol (UDP) to Unreal Engine as a generative source of a visual narrative.
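To make the UDP streaming step concrete, here is a minimal Python sketch of sending and decoding one frame of per-channel spike counts. The packet layout, the function names and the port number are all assumptions made for illustration; they are not the CL1's actual wire format or the Unreal Engine integration used in the performance.

```python
import socket
import struct

# Hypothetical packet layout (an assumption, not the CL1 wire format):
# little-endian uint32 frame index, uint16 channel count, then one
# uint16 spike count per channel.
HEADER = struct.Struct("<IH")


def encode_frame(frame_index, spike_counts):
    """Pack one frame of per-channel spike counts into bytes."""
    header = HEADER.pack(frame_index, len(spike_counts))
    body = struct.pack(f"<{len(spike_counts)}H", *spike_counts)
    return header + body


def decode_frame(datagram):
    """Unpack a datagram produced by encode_frame."""
    frame_index, n_channels = HEADER.unpack_from(datagram, 0)
    counts = struct.unpack_from(f"<{n_channels}H", datagram, HEADER.size)
    return frame_index, list(counts)


def receive_frames(host="127.0.0.1", port=9000, max_frames=1):
    """Listen on a UDP socket and yield decoded frames (port is hypothetical)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.bind((host, port))
        for _ in range(max_frames):
            datagram, _addr = sock.recvfrom(65535)
            yield decode_frame(datagram)
```

UDP suits this kind of real-time stream because a late frame is usually less useful than the next one: the receiver simply renders whatever arrives, and a dropped datagram costs one frame rather than stalling the visuals.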

For Actual Intelligence, we adapted and further developed this experience into a performance where the CL1 was connected to a Commodore 64 to generate real-time music signatures from neuronal activity as a biological audio synthesiser. Thanks to NCM Studio’s Cameron Holman we were able to make this dream come true.

There’s a level of mutual embodying from humans to the living cells in a dish and vice versa, which opens up such a fun and complex understanding of post-human interactions in the synthetic bioengineered intelligence space!

JW: Brett, biological neural systems like those in the CL1 have been shown to learn to play games and adapt to stimuli. Can you talk through examples from your work and how you see this relating to broader conversations about learning, problem-solving or creativity in machines?

BK: One of the most fascinating things we’ve seen in the lab at Cortical Labs is that living neural networks can learn from interaction with an environment, even when they’re grown outside the body. That idea moved from theory into something tangible in our early experiments, where neurons learned to play a simplified version of the classic game Pong.

In that work, neurons grown on multi-electrode arrays were connected to a simulated game environment. The electrodes allowed the system to send signals representing the game state—like where the ball was—to the neural network, and then read the neurons’ activity back as actions, such as moving a paddle up or down. The key element was feedback. When the neurons produced activity that led to a successful outcome, the sensory signals remained predictable and stable. When they failed, the system delivered more unpredictable input. Over time, the neurons reorganised their activity patterns to minimise that unpredictability, and the network became better at controlling the paddle.
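The loop described here (encode the game state as stimulation, read activity back as an action, then give predictable or unpredictable feedback) can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the published experimental protocol: the electrode count, paddle step, hit window and the `read_activity` callable are all hypothetical stand-ins for the real hardware interface.

```python
import random


def encode_ball_position(ball_y, n_electrodes=8):
    """Map a ball position in [0, 1] to the index of one stimulation site."""
    return min(int(ball_y * n_electrodes), n_electrodes - 1)


def decode_action(activity_up, activity_down):
    """Turn activity in two readout regions into a paddle move (-1, 0 or +1)."""
    if activity_up > activity_down:
        return 1
    if activity_down > activity_up:
        return -1
    return 0


def choose_feedback(hit, rng, n_electrodes=8):
    """Same pattern after every hit, a random pattern after a miss."""
    if hit:
        return [1] * n_electrodes
    return [rng.randint(0, 1) for _ in range(n_electrodes)]


def run_trial(ball_y, paddle_y, read_activity, rng, half_width=0.2):
    """One pass of the loop: stimulate, read, act, then pick the feedback."""
    site = encode_ball_position(ball_y)
    up, down = read_activity(site)  # stand-in for recording from the culture
    paddle_y = min(1.0, max(0.0, paddle_y + 0.1 * decode_action(up, down)))
    hit = abs(paddle_y - ball_y) <= half_width
    return paddle_y, hit, choose_feedback(hit, rng)
```

Note that nothing in the sketch rewards or penalises the network numerically. The only difference between success and failure is how predictable the next input is, which is the point of the feedback design described above.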

What’s remarkable is that this behaviour emerges from basic biological learning mechanisms, rather than being programmed in the way artificial neural networks are. The neurons are constantly rewiring and adjusting their connections through plasticity. In other words, the system learns because biological networks naturally try to organise themselves in ways that make their environment more predictable.

That’s where the broader implications become interesting. In AI, learning is usually driven by algorithms such as reinforcement learning, where rewards and penalties are mathematically defined. In biological systems, learning seems to arise from more general principles—like adapting to sensory feedback and maintaining stable internal dynamics. Our work suggests that even relatively small neural cultures can demonstrate these principles when they’re embedded in an environment where their actions matter.

Looking forward, platforms like the CL1 allow us to explore these behaviours in much more controlled ways. We can test how biological networks learn, how drugs alter that learning, and how neural systems solve simple problems through adaptation. That doesn’t mean these systems are “creative” in the human sense, but they do show something powerful: intelligence can emerge from living neural tissue interacting with a world.

For the broader conversation about machine intelligence, it hints at a third path. Instead of purely biological brains or purely digital AI, we may end up with hybrid systems that combine the efficiency and adaptability of biology with the reliability of electronics. That could give us entirely new ways to study learning, and potentially build machines that approach problem-solving in ways closer to how living systems do it.

JW: Jenn, what role do you see artists playing in shaping the future of SBI? And what about people without scientific backgrounds more broadly?

JL: I find it such an incredible experience collaborating with Cortical Labs and, more expansively, across the arts and sciences. Scientists are among some of the most open-minded researchers/creators/developers. They’re often some of the most creative people I know too, while providing so much surface area for design.

For artists, perhaps their main role in this is to develop engaging narratives that can help translate the rigorous research in an experiential way. I’ve personally moved away from developing work that is purely visual, to considering more embedded interaction design in this project. Hopefully the focus on experience resonates with people who are normally less exposed to this type of scientific research and provides an alternative way to learn about science!

BK: Artists and non-scientists are essential to shaping where SBI goes next.

One of the lessons we’ve learned at Cortical Labs is that new technologies don’t evolve only in laboratories. They evolve through the way people interpret, question, and interact with them. Artists are uniquely good at that. They often approach systems like SBI without the assumptions scientists bring, which means they ask very different questions. Sometimes those questions reveal possibilities that researchers hadn’t considered—whether that’s new forms of interaction, new ways of visualising neural activity, or new ways of thinking about what intelligence even means.

Artists also play an important role in making the technology legible. Growing neurons on silicon and letting them interact with digital environments can sound abstract or even a bit science-fictional. Artistic collaborations can turn those ideas into experiences people can see, feel, and explore. That kind of translation is incredibly valuable because it allows the public to engage with the science directly rather than just hearing about it in technical language.

JW: Jenn, embodiment is a core theme of the exhibition FRIEND. How do you see questions of embodiment intersecting with biocomputing and how might that change how you think about intelligence, learning or care?

JL: One of the major tangents of biocomputing in relation to embodiment is the possibility of training living cells to learn new contexts and thereby embodying new environments. By translating a virtual game environment and discretising the space and tasks into electrical signals, we are also training the living cells to physically embody the space through electrical communication.

As the Microelectrode Array (MEA) interface stimulates and simulates an environment, we are also “giving the organoids something to think about—something to be or do,” as my collaborator Chloe Loewith says.

Thinking about reservoir computing has been a significant shift in my understanding of intelligence, in that we can do computation on a physical system by studying its inputs and outputs. In this case, we are really treating the pool of living neurons as a physical system with neuroplasticity. The fact that this physical system can grow and learn to do things is pretty incredible.
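For readers unfamiliar with reservoir computing, the idea can be sketched in software: a fixed dynamical system (the "reservoir") is driven by inputs, and only a simple linear readout of its states is trained. The Python sketch below is a generic software reservoir, not a model of living neurons. All names and sizes are illustrative assumptions, and the readout uses a normalised least-mean-squares update rather than the ridge regression more commonly used in the literature.

```python
import math
import random


def make_reservoir(n_units, seed=0, scale=0.5):
    """Fixed random input and recurrent weights; these are never trained."""
    rng = random.Random(seed)
    w_in = [rng.uniform(-1, 1) for _ in range(n_units)]
    # Each row's absolute sum stays below `scale`, so old inputs fade out.
    w_rec = [[rng.uniform(-1, 1) * scale / n_units for _ in range(n_units)]
             for _ in range(n_units)]
    return w_in, w_rec


def run_reservoir(inputs, w_in, w_rec):
    """Drive the reservoir with a scalar input sequence and record its states."""
    n = len(w_in)
    state = [0.0] * n
    states = []
    for u in inputs:
        state = [
            math.tanh(w_in[i] * u + sum(w_rec[i][j] * state[j] for j in range(n)))
            for i in range(n)
        ]
        states.append(state)
    return states


def train_readout(states, targets, lr=0.5, epochs=50):
    """Fit linear readout weights with normalised least-mean-squares updates."""
    weights = [0.0] * len(states[0])
    for _ in range(epochs):
        for x, y in zip(states, targets):
            error = y - sum(w * xi for w, xi in zip(weights, x))
            norm = 1e-8 + sum(xi * xi for xi in x)
            weights = [w + lr * error * xi / norm for w, xi in zip(weights, x)]
    return weights


def predict(states, weights):
    """Apply the trained readout to recorded reservoir states."""
    return [sum(w * xi for w, xi in zip(weights, x)) for x in states]
```

In the biological case described above, the culture of neurons would take the place of `run_reservoir`: the experimenter does not set its internal weights, and only observes how its activity responds to inputs.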

JW: Brett, I’m interested in how you both approach questions of access and agency. The Cortical Cloud provides user access to biocomputing without the need to maintain specialised equipment. How do questions of access shape your work at Cortical Labs? How do we keep systems accessible in a climate of increasing platform enclosure or “enshittification”?

BK: Access is actually one of the central motivations behind what we’re building at Cortical Labs.

Historically, studying living neural systems has required highly specialised laboratories, expensive equipment, and years of technical training just to maintain the biological cultures. That means only a relatively small number of institutions in the world can participate. One of the ideas behind the Cortical Cloud and the CL1 platform is to lower that barrier dramatically. Instead of needing to build a full wet lab, researchers, students, artists, or developers can interact with living neural networks remotely—sending inputs, receiving neural activity, and designing experiments through software.

Ultimately, the most exciting future for SBI is one where it isn’t limited to a handful of well-funded labs. If platforms like Cortical Cloud succeed, we could move toward a world where interacting with living neural systems becomes something that students, researchers, and creative practitioners around the world can participate in, like what Jenn is doing at NCM! And when more people can explore a new kind of intelligence, the pace and diversity of discovery tends to accelerate in ways that no single institution could plan on its own.

JW: Jenn, can you talk about Assembloid Agency, and how you’d like to see it evolve in the future?

JL: I foresee that this new era of computing will give rise to many different configurations of physical and neural assemblies, driven by advances in MEA design, bioprinting technologies, and microfluidic platforms. For me, it may be useful to consider how games can help benchmark different behaviours and performance across different pools of neurons. I am particularly interested in deepening my research into multiplayer games for living neurons, as well as exploring communication between different scales and pools of neuronal culture.

JW: Brett, beyond medicine or neuroscience studies, what kinds of applications do you see for SBI that might surprise people? How could this technology reshape broader systems, including scientific, cultural, industrial or ecological?

BK: Medicine and neuroscience research are only the first obvious applications. The deeper opportunity is that we’re learning how to work with a new kind of information-processing substrate: living neural systems that can adapt, learn, and solve problems with extraordinary efficiency. And once you think of it that way, the possible applications start to become much broader, sometimes in ways that surprise people.

One area that I think will be transformative is computing itself. Modern AI systems are incredibly powerful, but they are also extremely energy-hungry. Training large models can consume enormous amounts of data and electricity. Biological neural systems, by contrast, can learn with very few examples and very little energy.

Another direction is scientific discovery itself. Platforms like the CL1 let researchers interact with living neural networks in real time in a closed-loop environment. As I mentioned previously, this opens access to a range of individuals from both science and non-science backgrounds to interface with this scientific tool, and who knows what they can find from here!

There are also potential industrial applications that may sound like science fiction now, but could be unlocked by this technology. Biological neural systems are remarkably good at solving noisy, uncertain problems, the kinds of problems that appear in logistics, adaptive control systems, and complex environments. There may be ways to use these systems to navigate environments more effectively, or to build adaptive devices.

JW: What would you both like people to take away from encountering this exhibit in the gallery?

BK: What I’d really like people to take away is a sense of wonder and curiosity about intelligence itself. We’re so used to thinking about intelligence as something locked inside our heads or inside computers that we rarely stop and ask what it actually is. When people encounter this exhibit, they’re seeing a very simple neural system interacting with the world in real time, learning from feedback, and adapting. In a way, it strips intelligence back to its fundamentals and lets us observe it emerging from living cells.

I also hope people walk away realising that science isn’t some distant, abstract activity that only happens in specialised labs. Technologies like the systems we’re developing at Cortical Labs are beginning to make it possible to interact with biological neural networks in entirely new ways.

Cortical Labs

Cortical Labs is an Australian biotechnology company based in Melbourne that develops platforms for studying and harnessing biological intelligence. The company focuses on creating systems where living neurons—typically derived from human induced pluripotent stem cells (iPSCs)—are interfaced with computing hardware so they can sense, process information, and interact with digital environments in real time.

About

Dr Brett Kagan

Dr Brett Kagan is the Chief Scientific Officer (CSO) and Chief Operations Officer (COO) at Cortical Labs. His research focuses on understanding how intelligence emerges from biological neural networks and how these systems can be harnessed as a new form of computing known as Synthetic Biological Intelligence (SBI).

Trained as a neuroscientist, Kagan’s work sits at the intersection of neuroscience, bioengineering, and artificial intelligence. He has led pioneering experiments demonstrating that networks of neurons grown on microelectrode arrays can interact with digital environments and learn simple tasks through feedback. These experiments provided some of the first demonstrations of SBI, showing how living neural networks can adapt their activity to process information and improve performance over time.

At Cortical Labs, Kagan leads the scientific development of platforms such as CL1, which integrates cell culture systems, neural recording and stimulation hardware, and software tools that allow researchers to study and interact with living neural networks in real time.

His work contributes to the emerging fields of biological computing and organoid intelligence, with the goal of developing new tools for neuroscience research, accelerating drug discovery, and exploring how biological systems can be used as adaptive computational substrates.

Jenn Leung

Jenn Leung is a creative technologist and simulation developer building game engine simulations and real-time streaming tools. Currently her research focuses on developing UE interfaces for living neurons and agent behaviour simulation, with two papers recently published in the MIT Antikythera Journal and a paper on UE-API for brain-on-a-chip platforms presented at NeurIPS 2025.

She is a Senior Lecturer in Creative Technology & Design at University of the Arts London, a researcher at LifeFabs Institute, and a Visiting Researcher at The Bartlett School of Architecture, UCL, working on the 100 Minds in Motion project combining EEG, eye-tracking, and movement data in an agent simulation. She was previously a studio researcher at Antikythera's Cognitive Infrastructures Studio in 2024, supported by the Berggruen Institute.

Her work has been exhibited at Epic Games Innovation Lab, Ars Electronica, Medialab Matadero, W1 Curates, Tai Kwun Hong Kong, National Communication Museum (Australia), CIVA Festival, DAE Research Festival, PAF Olomouc, ALife Conference Kyoto, Aksioma, and Museum of Art in Public Spaces (Køge) among others, and has been featured in Dazed, TANK Magazine, DIS, SHOWStudio, Art Asia Pacific, COEVAL Magazine, and AQNB.

In collaboration with Daniel Felstead, she has produced a short film series, ‘I’m so Janky’, from DIS that explores the myths, ideologies and realities of the metaverse, AI, and Neuralink. She also collaborates with dmstfctn on simulation projects for Serpentine Arts Technologies and the Leonardo Supercomputer at Bologna’s Tecnopolo.

Jemimah Widdicombe

Jemimah Widdicombe leads the development of temporary exhibitions and acquisitions as Senior Curator at the National Communication Museum (NCM) in Naarm/Melbourne, Australia. Her work focuses on the intersection of culture and technology, and is underpinned by foundations in human interaction research and design and cultural production.

Jemimah is curator of the exhibitions FRIEND and Instruments of Surveillance, and co-curator of the NCM permanent galleries. Recent publications include un Magazine, The Conversation and PRISM. Her curatorial career spans over a decade of interdisciplinary projects, including strategic exhibitions, collections and content roles at Museums Victoria and the University of Melbourne, coupled with extensive experience in digital research, education and cultural program coordination in Kanaky New Caledonia and France.